Mirror of https://github.com/mudler/LocalAI.git (synced 2024-06-07 19:40:48 +00:00)

Update README

Commit 730645b3c6, parent 3dd632fd5a

README.md

@@ -9,21 +9,29 @@

[Discord](https://discord.gg/uJAeKSAGDy)


[Documentation website](https://localai.io/)


**LocalAI** is a drop-in replacement REST API that's compatible with the OpenAI API specification for local inferencing. It lets you run LLMs (and other models) locally or on-prem on consumer-grade hardware, and supports multiple model families compatible with the ggml format. It does not require a GPU.
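As a minimal sketch of what "drop-in replacement" means in practice, the Python snippet below builds an OpenAI-style chat completion request against the `/v1/chat/completions` path using only the standard library. It assumes a LocalAI instance listening on `localhost:8080`, and `ggml-gpt4all-j` is a hypothetical model name; substitute a model you have actually configured.

```python
import json
import urllib.request

# Assumption: a LocalAI instance reachable at this address; adjust as needed.
BASE_URL = "http://localhost:8080"


def chat_request(model: str, prompt: str, temperature: float = 0.7) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {
        "model": model,  # must match a model name LocalAI knows about
        "messages": [{"role": "user", "content": prompt}],
        "temperature": temperature,
    }
    return urllib.request.Request(
        f"{BASE_URL}/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(req: urllib.request.Request) -> dict:
    """POST the request and decode the JSON reply (needs a running server)."""
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read().decode("utf-8"))


# Hypothetical model name; replace it with one from your models directory.
req = chat_request("ggml-gpt4all-j", "How are you?")
# reply = send(req)
# print(reply["choices"][0]["message"]["content"])
```

Because the request shape follows the OpenAI specification, existing OpenAI client code can usually be pointed at the LocalAI base URL instead of issuing raw HTTP requests like this.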


For a list of the supported model families, please see [the model compatibility table](https://localai.io/model-compatibility/index.html#model-compatibility-table).


In a nutshell:


- Local, OpenAI drop-in alternative REST API. You own your data.
- NO GPU required. NO Internet access is required either.
  - Optional GPU acceleration is available for `llama.cpp`-compatible LLMs. See also the [build section](https://localai.io/basics/build/index.html).
- Supports multiple models:
  - 🗣 Text to Audio 🎺🆕
  - 🔈 Audio to Text (audio transcription with `whisper.cpp`)
  - 📖 Text generation with GPTs (`llama.cpp`, `gpt4all.cpp`, ... and more)
  - 🎨 Image generation with Stable Diffusion
- 🏃 Once loaded the first time, models are kept in memory for faster inference.
- ⚡ Doesn't shell out, but uses C++ bindings for faster inference and better performance.
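Each capability in the list above is served through its own HTTP endpoint. The sketch below builds (but does not send) JSON requests for image generation and text to audio. The `/v1/images/generations` path and its `prompt`/`size` fields follow the OpenAI images API; the `/tts` path, its request fields, and the model name `my-tts-model` are assumptions, labeled hypothetical, to check against the documentation for your LocalAI version.

```python
import json
import urllib.request

# Assumption: a LocalAI instance reachable at this address.
BASE_URL = "http://localhost:8080"


def json_post(path: str, body: dict) -> urllib.request.Request:
    """Build a JSON POST request for a LocalAI endpoint (not sent here)."""
    return urllib.request.Request(
        BASE_URL + path,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Image generation with Stable Diffusion via the OpenAI-style images endpoint.
image_req = json_post(
    "/v1/images/generations",
    {"prompt": "A cute baby sea otter", "size": "256x256"},
)

# Text to audio. Hypothetical: the /tts path, field names, and model name
# are assumptions for illustration only.
tts_req = json_post(
    "/tts",
    {"model": "my-tts-model", "input": "Hello from LocalAI"},
)

# Audio to text goes to /v1/audio/transcriptions as a multipart file upload,
# so it does not fit this JSON-only helper.
```

Either request can be sent with `urllib.request.urlopen` once a server with the matching models is running.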


LocalAI was created by [Ettore Di Giacinto](https://github.com/mudler/) and is a community-driven project focused on making AI accessible to anyone. Contributions, feedback, and PRs are welcome!


See the [examples](https://github.com/go-skynet/LocalAI/tree/master/examples/) for how to integrate LocalAI with other popular projects:

|

||||

|

||||

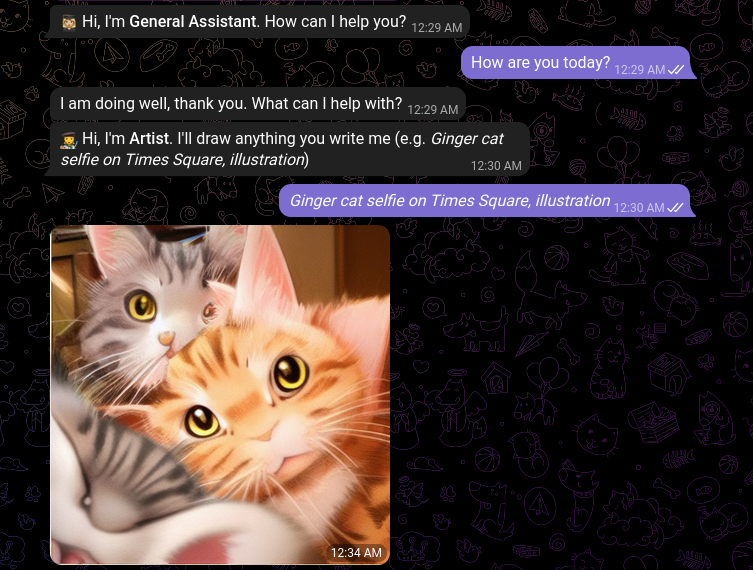

| [ChatGPT OSS alternative](https://github.com/go-skynet/LocalAI/tree/master/examples/chatbot-ui) | [Image generation](https://localai.io/api-endpoints/index.html#image-generation) |

|

||||

|------------------------------------------------------------------------------------------------------------------------|------------------------------------------------------------------------------------------------------------------------|

|

||||

@ -33,10 +41,9 @@ See the [examples](https://github.com/go-skynet/LocalAI/tree/master/examples/) o

|

||||

|------------------------------------------------------------------------------------------------------------------------|------------------------------------------------------------------------------------------------------------------------|

|

||||

| | |


See the [Getting started](https://localai.io/basics/getting_started/index.html) and [examples](https://github.com/go-skynet/LocalAI/tree/master/examples/) sections to learn how to use LocalAI. For a list of curated models, check out the [model gallery](https://localai.io/models/).
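Models from the gallery can also be installed through the API. A minimal sketch, assuming a `/models/apply` endpoint that accepts a JSON body with a `url` gallery reference and returns a job ID that can be polled at `/models/jobs/<id>`. Both paths, the local address, and the example reference `github:go-skynet/model-gallery/gpt4all-j.yaml` are assumptions to verify against the model gallery documentation.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8080"  # assumption: local LocalAI instance


def gallery_apply_request(model_ref: str) -> urllib.request.Request:
    """Build a request asking LocalAI to install a gallery model (assumed /models/apply)."""
    return urllib.request.Request(
        f"{BASE_URL}/models/apply",
        data=json.dumps({"url": model_ref}).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def job_status_url(job_id: str) -> str:
    """URL for polling an install job (assumed /models/jobs/<id>)."""
    return f"{BASE_URL}/models/jobs/{job_id}"


# Assumed gallery reference format; check the model gallery page for real entries.
req = gallery_apply_request("github:go-skynet/model-gallery/gpt4all-j.yaml")
```

Splitting installation into a request plus a pollable job keeps large downloads from blocking the HTTP call; a client would send `req`, read the returned job ID, and poll `job_status_url(...)` until the job reports completion.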


## News


- 🔥🔥🔥 28-06-2023: **v1.20.0**: Added text to audio and Hugging Face gallery repositories! [Release notes](https://localai.io/basics/news/index.html#-28-06-2023-__v1200__-) [Changelog](https://github.com/go-skynet/LocalAI/releases/tag/v1.20.0)
- 🔥🔥🔥 19-06-2023: **v1.19.0**: CUDA support! [Release notes](https://localai.io/basics/news/index.html#-19-06-2023-__v1190__-) [Changelog](https://github.com/go-skynet/LocalAI/releases/tag/v1.19.0)
- 🔥🔥🔥 06-06-2023: **v1.18.0**: Many updates, new features, and much more 🚀! Check out the [Release notes](https://localai.io/basics/news/index.html#-06-06-2023-__v1180__-)!
- 29-05-2023: LocalAI now has a website, [https://localai.io](https://localai.io)! Check the news in the [dedicated section](https://localai.io/basics/news/index.html)!

@@ -188,7 +195,10 @@ Feel free to open up a PR to get your project listed!

- [x] Support for embeddings
- [x] Support for audio transcription with https://github.com/ggerganov/whisper.cpp
- [x] GPU/CUDA support (https://github.com/go-skynet/LocalAI/issues/69)
- [x] Enable automatic downloading of models from a curated gallery
- [ ] Enable automatic downloading of models from HuggingFace
- [ ] Enable gallery management directly from the webui.
- [ ] 🔥 OpenAI functions: https://github.com/go-skynet/LocalAI/issues/588


## Star history
